Thanks to all of our amazing contributors.

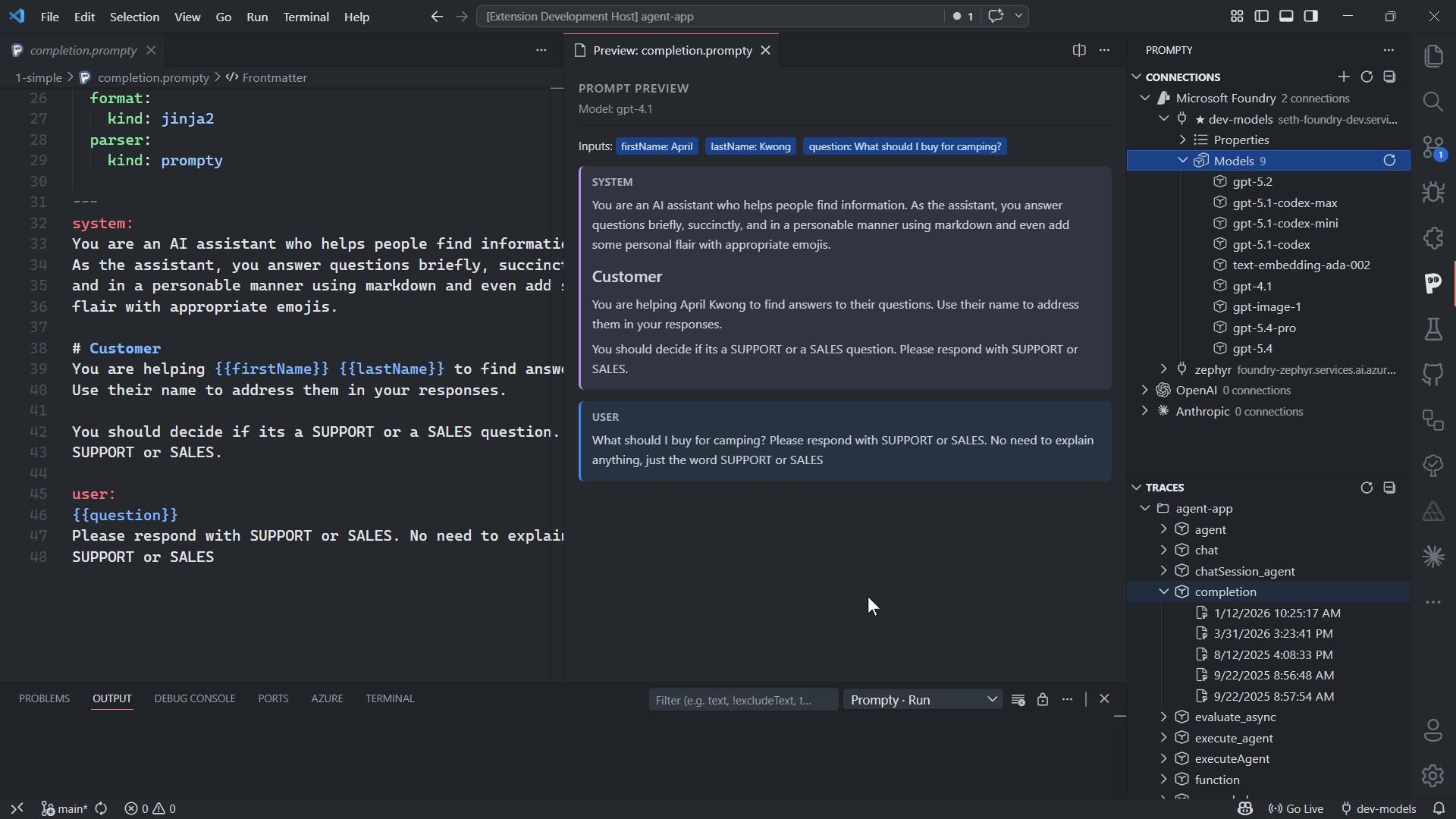

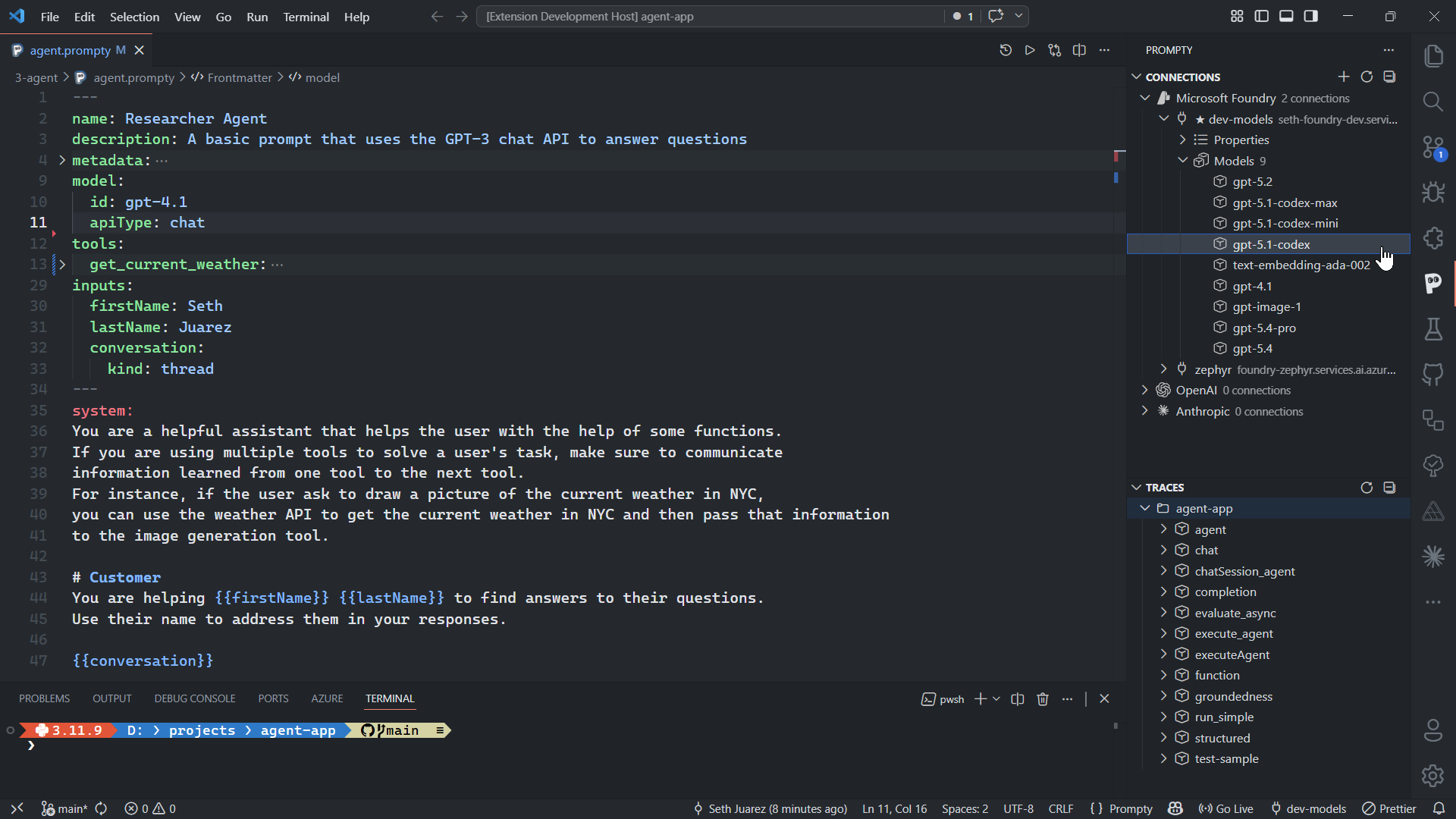

A .prompty file pairs YAML frontmatter with a markdown prompt body in a single, portable asset. Define your model, inputs, tools, and template, then execute it from Python, TypeScript, or VS Code with one command.
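To make the "frontmatter plus body" layout concrete, here is a minimal sketch that splits such a file into its two parts using only the Python standard library. The helper `split_prompty`, the sample field names (`name`, `model`, `id`), and the role markers in the body are illustrative assumptions, not the Prompty schema or its loader.

```python
def split_prompty(text: str) -> tuple[str, str]:
    """Split a document into (frontmatter, body) at the `---` fences.

    Hypothetical helper for illustration; not part of the Prompty runtime.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return "", text  # no frontmatter block found
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            frontmatter = "\n".join(lines[1:i])
            body = "\n".join(lines[i + 1:]).strip()
            return frontmatter, body
    raise ValueError("frontmatter opened with '---' but never closed")


# Sample content; the field names are assumptions made for this sketch.
EXAMPLE = """---
name: greeting
model:
  id: example-model
---
system:
You are a helpful assistant.

user:
Say hello to {{name}}.
"""

frontmatter, body = split_prompty(EXAMPLE)
print(frontmatter)
print(body)
```

A real loader would go on to parse the frontmatter as YAML and render the body as a template; this sketch stops at the split to stay dependency-free.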

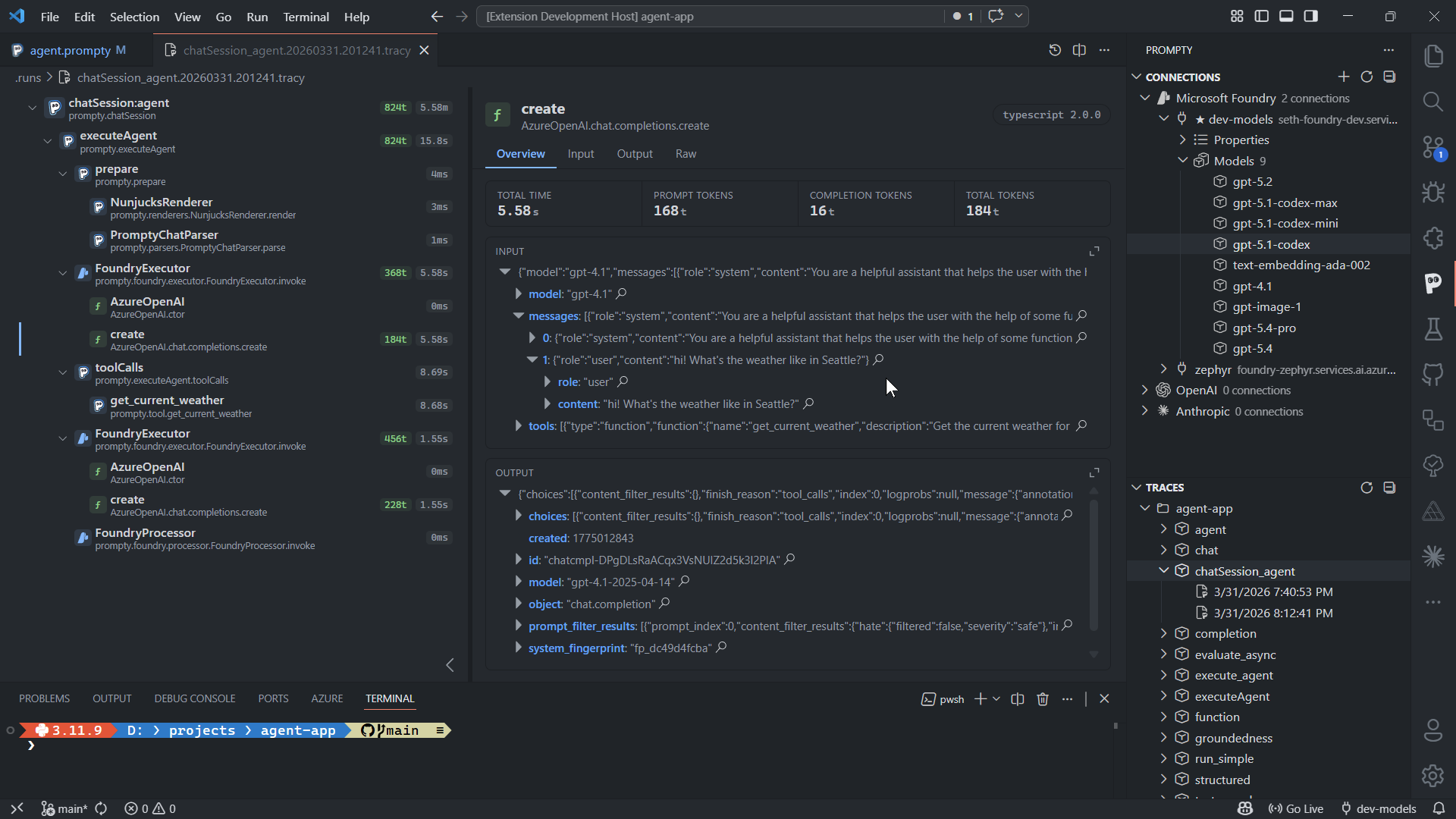

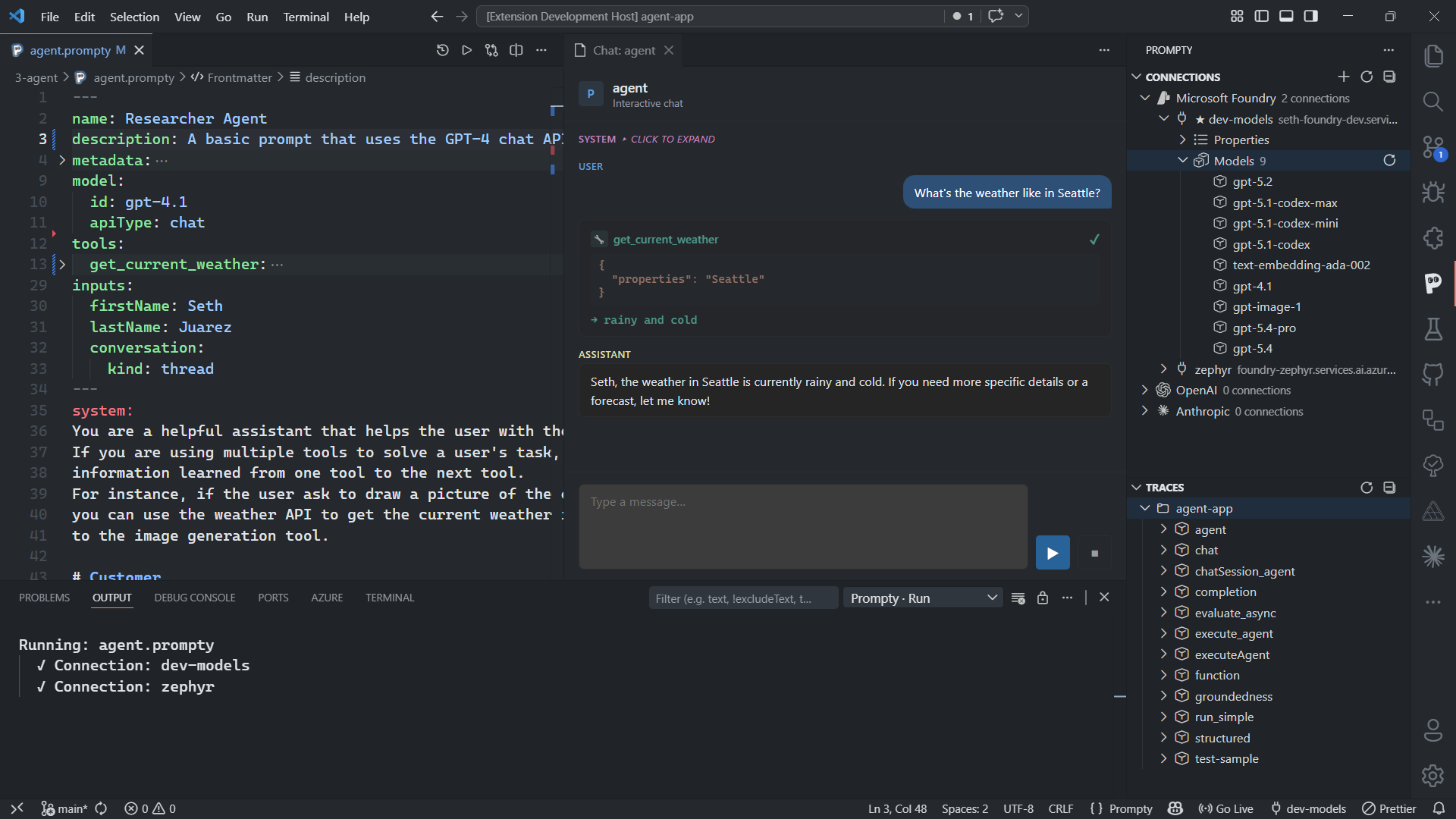

The Prompty VS Code extension is your prompt engineering workbench. Write prompts with full language support, run them with F5, and inspect every detail in the built-in trace viewer.

Every execution produces a detailed trace — messages sent, tokens used, latency, and the raw API response. Debug prompt issues in seconds, not hours.

@trace for end-to-end visibility

Prompty processes every prompt through a four-stage pipeline — each stage is swappable and independently traceable.
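As a rough illustration of what "swappable and independently traceable" stages can look like, the sketch below chains four plain functions and records each stage's output. The stage names (`render`, `parse`, `execute`, `process`), the `Pipeline` class, and the fake executor are assumptions made for this example; they are not taken from the Prompty source.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

Stage = Callable[[Any], Any]


@dataclass
class Pipeline:
    """Hypothetical pipeline: runs named stages in order and keeps a trace."""
    stages: list[tuple[str, Stage]]
    trace: list[tuple[str, Any]] = field(default_factory=list)

    def run(self, value: Any) -> Any:
        self.trace = []  # start a fresh trace for each run
        for name, stage in self.stages:
            value = stage(value)
            self.trace.append((name, value))  # record each stage's output
        return value

    def swap(self, name: str, stage: Stage) -> None:
        """Replace one stage by name, leaving the others untouched."""
        self.stages = [(n, stage if n == name else s) for n, s in self.stages]


def render(inputs: dict[str, str]) -> str:
    return "user: Say hello to {name}.".format(**inputs)


def parse(text: str) -> list[dict[str, str]]:
    role, _, content = text.partition(":")
    return [{"role": role.strip(), "content": content.strip()}]


def fake_execute(messages: list[dict[str, str]]) -> dict[str, Any]:
    # Stand-in for a model call; it only echoes the last message.
    return {"choices": [{"text": "Echo: " + messages[-1]["content"]}]}


def process(response: dict[str, Any]) -> str:
    return response["choices"][0]["text"]


pipeline = Pipeline([
    ("render", render),
    ("parse", parse),
    ("execute", fake_execute),
    ("process", process),
])
result = pipeline.run({"name": "Ada"})
print(result)
```

Because each stage is just a named callable, a call such as `pipeline.swap("execute", other_executor)` replaces one step without touching the rest.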

Same .prompty file works across Python, TypeScript, and VS Code — no rewriting.

OpenAI, Microsoft Foundry, Anthropic — or build your own executor in minutes.

Built-in tracing with pluggable backends — console, files, or OpenTelemetry.
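The idea of a tracing decorator that fans out to pluggable backends can be sketched in a few lines of standard-library Python. Everything here (`add_backend`, the record fields, and this `trace` decorator) is a hypothetical stand-in written for illustration; it is not Prompty's `@trace` implementation or its backend API.

```python
import functools
import time
from typing import Any, Callable

Backend = Callable[[dict[str, Any]], None]
_backends: dict[str, Backend] = {}


def add_backend(name: str, backend: Backend) -> None:
    """Register a backend that receives one record per traced call."""
    _backends[name] = backend


def trace(func: Callable[..., Any]) -> Callable[..., Any]:
    """Hypothetical decorator: time the call and send a record to every backend."""
    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        result = func(*args, **kwargs)
        record = {
            "name": func.__name__,
            "args": args,
            "kwargs": kwargs,
            "result": result,
            "seconds": time.perf_counter() - start,
        }
        for backend in _backends.values():
            backend(record)
        return result
    return wrapper


# Two example backends: an in-memory list and a console printer.
records: list[dict[str, Any]] = []
add_backend("memory", records.append)
add_backend("console", lambda r: print(f"{r['name']} took {r['seconds']:.6f}s"))


@trace
def build_greeting(name: str) -> str:
    return f"Hello, {name}!"


greeting = build_greeting("Ada")
```

A file or OpenTelemetry backend would plug in the same way, as another callable that accepts the record.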

Define tools in your frontmatter and let the runtime handle the tool-calling loop automatically. Supports streaming, structured output, and the OpenAI Responses API.
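For readers new to the pattern, a tool-calling loop generally looks like the sketch below: call the model, run any tool it requests, append the result, and repeat until it returns a plain answer. The message shape, `fake_model`, `get_weather`, and `run_with_tools` are all assumptions made for this example and do not reflect the Prompty runtime or any provider's wire format.

```python
import json
from typing import Any, Callable

Message = dict[str, Any]


def get_weather(city: str) -> str:
    """Example tool; returns a canned string instead of calling a service."""
    return f"Sunny in {city}"


TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}


def fake_model(messages: list[Message]) -> Message:
    """Stand-in for a model: requests one tool call, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"Forecast: {last['content']}"}
    return {
        "role": "assistant",
        "content": None,
        "tool_call": {
            "name": "get_weather",
            "arguments": json.dumps({"city": "Paris"}),
        },
    }


def run_with_tools(
    messages: list[Message],
    model: Callable[[list[Message]], Message],
    tools: dict[str, Callable[..., str]],
    max_turns: int = 5,
) -> str:
    """Loop until the model replies without requesting a tool."""
    messages = list(messages)  # do not mutate the caller's list
    for _ in range(max_turns):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]
        result = tools[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("tool loop did not finish within max_turns")


answer = run_with_tools(
    [{"role": "user", "content": "Weather in Paris?"}], fake_model, TOOLS
)
print(answer)
```

The `max_turns` cap is a design choice in this sketch: it keeps a model that keeps requesting tools from looping forever.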

Prompty is built on the premise that, even as AI systems grow more complex, the prompt remains a fundamental unit. The format is open, extensible, and designed to grow with the ecosystem.
